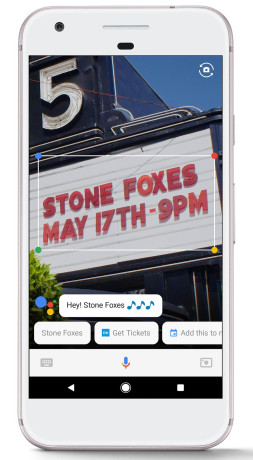

Google Lens Uses Machine Learning to Recognize Objects

May 17, 2017, 12:25 PM by Eric M. Zeman

Google today announced Google Lens, an image-recognition tool that uses a phone's camera to perform searches. The tool is a significant advance over the old Google Image Search app. Google says its neural networks are better than humans at recognizing objects. Using Google Lens, people can aim their camera at just about anything and Google will instantly perform a search and suggest results. For example, users can point their camera at a restaurant and immediately see the Google Search results for that restaurant, including reviews, hours, and location details. It can also recognize objects such as flowers, among other things. Google didn't say when Google Lens will be available.
