How a Sprint Phone Is Born

If only it were as easy as giving a faceless lab tech a phone and letting him/her play with it for several hours to certify it on Sprint's network. Instead, each and every device goes through rigorous testing protocols in a process that consumes, on average, a full calendar year.

After the basic specifications and design are picked, the device goes through nearly a half-year of locking down the final set of features and initial development. Once working mock-ups are prepared by the manufacturer, they are handed off to Sprint for certification.

Basic Tests

Each phone faces 40 to 50 custom Sprint specifications. There are tens of thousands of requirements and test cases that need to be passed. Most phones have more than 100 million lines of code, with more than 1,000 components (baseband, antenna, processor, ports, display) that come from more than 150 different suppliers (Qualcomm, Sierra Wireless, Cisco, Asicion, et al.).

Each phone has 80 to 90 unique applications that need to be tested and certified to work properly, and each device has to support more than 100 business policy and support tools. Lastly, the devices have to work properly on three different domestic networks that are run by seven different network infrastructure providers (Alcatel-Lucent, Ericsson, etc.). This last number balloons to hundreds of networks and infrastructure providers if a device is global.

The browser on an average smartphone has to meet 1,800 requirements and goes through 1,600 individual tests. Wi-Fi radios go through hundreds of tests. Bluetooth goes through more than 500 tests, and GPS goes through more than 200 tests. Music features go through more than 150 tests.

Signal Tests

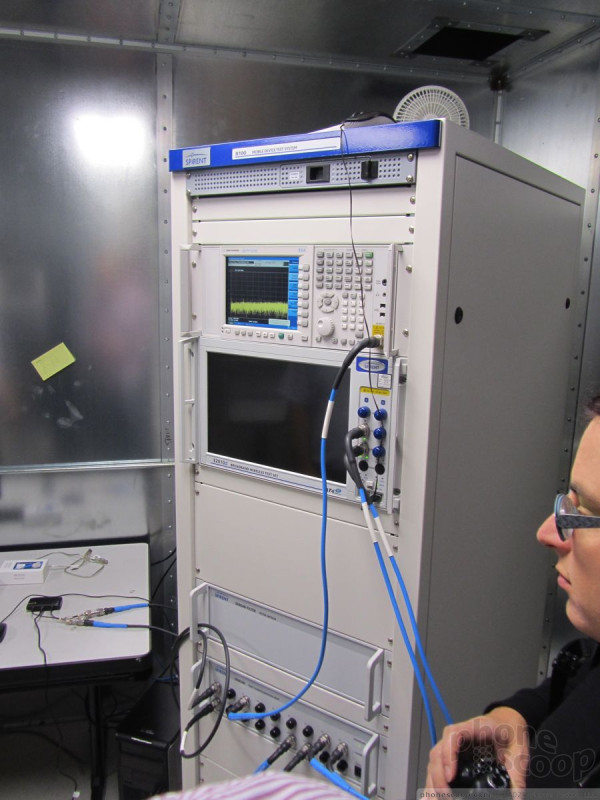

Device signal and RF performance is one of the core items tested on each device. Sprint devices go through more than 10,000 tests before they are certified to work on the Sprint network. The criteria they have to pass would astound you.

Cell phones and other devices are subject to 350 different wireless data tests and 30 different network authentication tests. Sprint uses several different facilities at its Overland Park headquarters to test RF performance. The phones are put in a chamber and surrounded by several dozen sensors to test sensitivity, power, connection, latency, and other factors affecting performance.

Things Sprint looks at include how well the device establishes a connection to the network itself and identifies itself, as well as speed on the network, call drops or blocks, and so on.

Then Sprint examines multiple handoff scenarios between various network types, depending on the state of the device. Is it active or dormant? Is it roaming between CDMA and LTE, or from CDMA 800 to CDMA 1900? And so on.

These technical tests are meant to ensure that each device is safe for the network (that it doesn't damage network performance) and that radio properties, RF properties, and RF performance criteria are met. Antenna, GPS, power save mode, global network selection, and network device data performance are all tested. These are all performed in a lab.

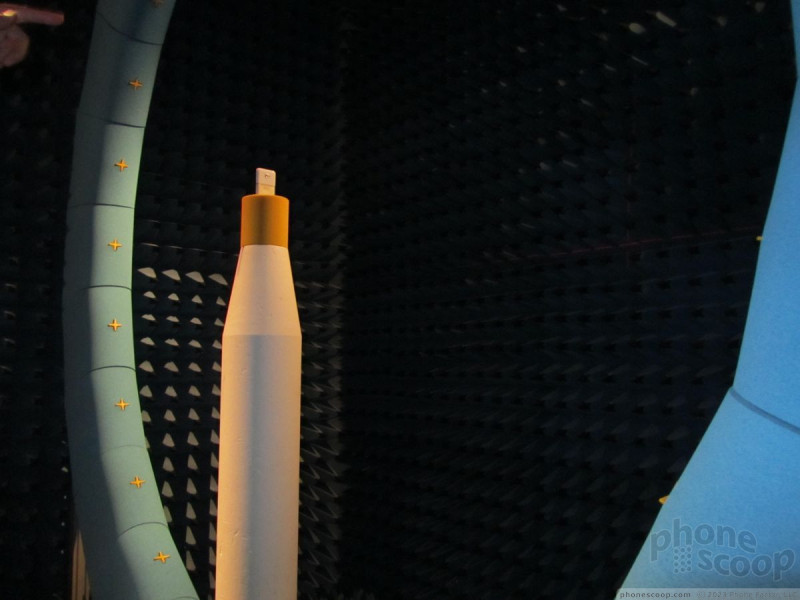

They also test the devices next to a mock human head, and in human hands with different grips, to see whether the way the phone is held, or where it is positioned, plays a role in signal performance. The mock human head is filled with a liquid that simulates brain matter. Not only does Sprint test signal performance on the network when obstructed by human body parts, but it also looks at the specific absorption rate (how much cell phone radiation is absorbed by cells in the body), just like the Federal Communications Commission.

All the data is measured and processed by massive, refrigerator-sized pieces of equipment that are extremely sensitive.

Once a device passes all these tests in a lab, it then has to pass them out in the real world.

Sprint has several drive loops that it takes its devices on to see how well they perform handoffs between towers, handoffs between network types (CDMA-to-LTE), and so on. There's one loop in Overland Park, one in Chicago, and one in Branchburg, NJ.

The real-world drive tests measure quality of voice calls, call drop rates, and call block rates. They measure the time it takes to send and receive text, picture, and video messages. They test the time it takes to route GPS-based directions, how accurate the navigation is, and how effective re-routes are. Drive tests measure how well video streams across the network, with pass criteria such as time to first frame, stall rates, and artifacting. Last, drive tests really take web browsing to task. They measure the time it takes to render pages on a device, how quickly pages are delivered to the device, and how quickly the network responds to the request. Sprint has its own set of test pages, and also tests against the top 10 internet sites.
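To make two of those drive-test metrics concrete, here is a minimal Python sketch of how a call block rate and a call drop rate could be computed from a log of call attempts. The `CallAttempt` record, the function names, and the sample numbers are all hypothetical illustrations. Sprint did not describe its tooling or its pass thresholds, so none of this reflects its actual software.

```python
from dataclasses import dataclass


@dataclass
class CallAttempt:
    """One call placed during a drive loop (hypothetical record layout)."""
    connected: bool  # False means the call was blocked at setup
    dropped: bool    # True means a connected call ended unexpectedly


def call_block_rate(attempts):
    """Fraction of all attempted calls that never connected."""
    if not attempts:
        return 0.0
    blocked = sum(1 for a in attempts if not a.connected)
    return blocked / len(attempts)


def call_drop_rate(attempts):
    """Fraction of connected calls that ended unexpectedly."""
    connected = [a for a in attempts if a.connected]
    if not connected:
        return 0.0
    dropped = sum(1 for a in connected if a.dropped)
    return dropped / len(connected)


# Made-up sample: 20 attempts, 2 blocked, 18 connected, 3 of those dropped.
sample_log = (
    [CallAttempt(connected=False, dropped=False)] * 2
    + [CallAttempt(connected=True, dropped=True)] * 3
    + [CallAttempt(connected=True, dropped=False)] * 15
)

print(f"block rate: {call_block_rate(sample_log):.1%}")
print(f"drop rate:  {call_drop_rate(sample_log):.1%}")
```

Note the different denominators: a blocked call counts against every attempt, while a dropped call only counts against calls that connected in the first place.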

App Tests

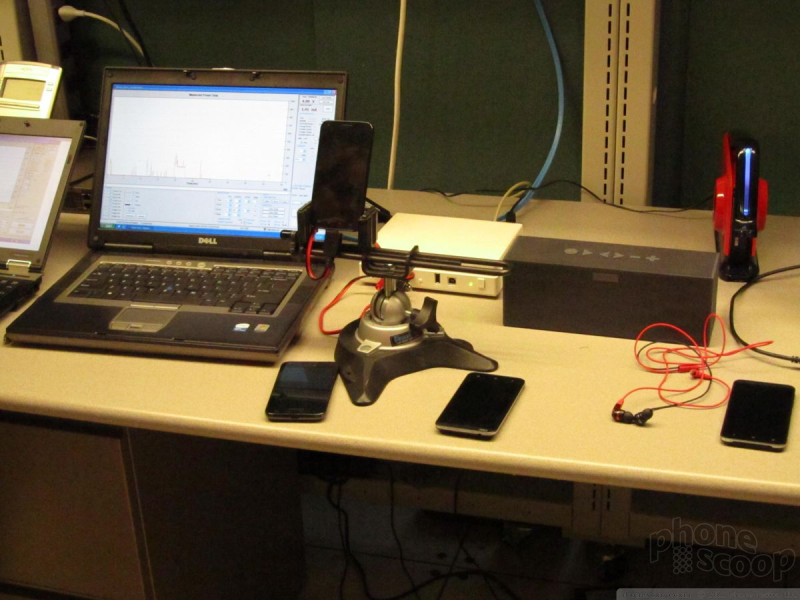

Sprint can't afford to give every application developer a full slate of its devices, but it has to let developers test applications somehow. Sprint has a really neat set of tools available that allow developers to access and use an actual device that is stored in Sprint's lab. Here's how it works.

Registered developers are given software that can hook into Sprint's test facilities. The software is used to access the phones remotely, which are live and hooked into Sprint's backend. Using the software, application developers can access and run (not simulate, really run) any feature or application on the device. The developer can activate all the buttons, rotate the screen, and perform any action that the hardware itself can do.

With this level of access, the developer can then install their application to see how it will perform on Sprint's network. They are able to activate each and every facet of their own application and test it against the device and its capabilities.

Sprint doesn't have an endless supply of these devices, either. In fact, developers have to reserve the devices ahead of time. When one is being used, no one else can access it. What's really interesting is that the devices stretch back years, across Sprint's entire catalog of devices. We spied some pretty old phones sitting in Sprint's rack gear that were being used to test Java and other apps, all remotely. Neat.
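The reserve-ahead, one-user-at-a-time rule described above boils down to refusing any booking that overlaps an existing one on the same device. The Python sketch below shows that idea only. The `DevicePool` class, its `reserve` method, and the device and developer names are invented for illustration and say nothing about how Sprint's real reservation software works.

```python
from datetime import datetime, timedelta


class DevicePool:
    """Tracks time-slot bookings for remotely accessible lab devices (hypothetical)."""

    def __init__(self):
        # device_id -> list of (start, end, developer) tuples
        self._bookings = {}

    def reserve(self, device_id, developer, start, end):
        """Book a device for [start, end); return False if the slot is taken."""
        if end <= start:
            raise ValueError("reservation must end after it starts")
        for booked_start, booked_end, _ in self._bookings.get(device_id, []):
            # Two half-open intervals overlap when each starts before the other ends.
            if start < booked_end and booked_start < end:
                return False
        self._bookings.setdefault(device_id, []).append((start, end, developer))
        return True


pool = DevicePool()
nine = datetime(2012, 1, 9, 9, 0)
hour = timedelta(hours=1)

print(pool.reserve("old-java-phone", "dev-a", nine, nine + hour))
print(pool.reserve("old-java-phone", "dev-b", nine + hour / 2, nine + 2 * hour))
print(pool.reserve("old-java-phone", "dev-b", nine + hour, nine + 2 * hour))
```

In this sketch the second request is refused because it overlaps the first, while the third succeeds because it starts exactly when the first booking ends.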

Battery Test

Battery testing is one of the last things to occur in the certification process. The manufacturer gives Sprint guidelines as to what it expects from the device, and once the code is finalized and the signal performance is where it needs to be, the devices are run through a battery (pun intended) of tests.

The tests are a bit boring, in reality. Rather than actually using the devices as you or I would to test the battery life, Sprint hooks them to computers via cable and runs software tests. The most important tests are for talk time and standby time. According to Sprint, near-final devices almost always measure up to expectations when it comes to battery life. It is very rare for a device to have significant battery problems.

But Sprint really does test everything. The certification software controls the device to perform every function, using all the radios and features.

Scope

The entire certification process at Sprint takes anywhere from 12 to 15 weeks, or between three and four months. Major problems are tackled first and must be corrected before devices ship. Minor problems discovered near the end of the certification cycle can hold up the launch, or be slated for fixes after launch via maintenance releases. Nearly every phone ships with known problems that will be corrected after launch.

From start to finish, each phone is developed and certified by about 350 to 400 people worldwide. Sprint's device team and senior management discuss the progress of each device at a meeting every single week.

Sprint took pains to point out that preparing a new version of the operating system for a single phone takes just as much work, and just as much time, as creating the original build of the device. That's 12 to 15 weeks, if there aren't any problems. In other words, if a device ships with Android 2.3 but will be updated to Android 4.0, it takes Sprint three to four months to test, certify, and prepare the update.
